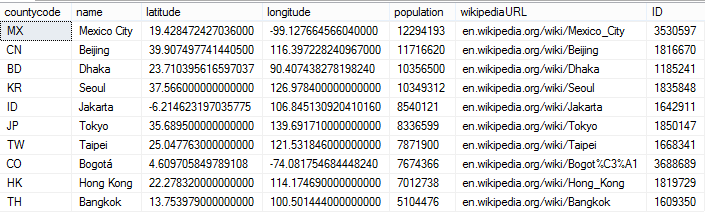

JSON to SQLite database. Description: This tool takes a JSON file's data and puts it inside a SQLite database. It creates a database with a table whose name you pass as an argument when running the tool, and adds columns to it based on the dictionary keys in the JSON file.

Background (why this package was developed): I'm working on another Python project that requires me to store a very minimal amount of data, so I decided to use SQLite as a database.

Storing JSON into a SQLite database in Python. Hello, I want to insert a JSON file into my SQLite db. But is there a way to do that without converting the JSON data into a string value? `cmd = "CREATE TABLE IF NOT EXISTS Note (note TEXT)"`, `cursor.execute(cmd)`, `with open("notes.json") as f:`, `data = str(json.` …

`import pandas as pd`, `import json`, `import sqlite3`. Open the JSON data: `with open('datasets.json') as f: data = json.load(f)`. Create a DataFrame from the JSON data: `df = pd.DataFrame(data)`. Now we need to create a connection to our SQL database.

In this post, we'll build SQLite with the new JSON extension, then build pysqlite against the JSON-ready SQLite. I've done this now on Arch and Ubuntu, but I'm not sure about fapple or windoze.

The data manipulation API in Optimus is like Pandas, but it offers more. Its accessors make various tasks much easier to perform: for example, you can sort a DataFrame, filter it based on column values, change data using specific criteria, or narrow down operations based on certain conditions. Moreover, Optimus includes processors designed to handle common real-world data types such as email addresses and URLs. You can load from and save back to Arrow, Parquet, Excel, various common database sources, or flat-file formats like CSV and JSON. Although Optimus is still under active development, its last official release was in 2020, so it may be less up to date than other components in your stack.

I saw the new JSON changes got merged into the trunk and was hoping it might improve a big upsert I do, so I updated and gave it some tests. Testing it (with an in-memory DB and empty table, and an on-disk DB with the real table) shows about a 20% increase in time required for the query. The upsert was `INSERT INTO app_names SELECT Value->'appid', Value->'name' FROM json_each(?) WHERE 1 ON CONFLICT DO UPDATE SET Name=excluded.Name WHERE Name!=excluded.Name`. The JSON being parsed is essentially […] and the table is defined as `CREATE TABLE app_names (AppID INTEGER PRIMARY KEY, Name TEXT)`. Seems unfortunate, but I'm guessing it's because the caching doesn't get used much, so it just slows the parsing down in this case? Admittedly I'm going to get around to moving the JSON parsing out of SQL, so it's not like it'll eventually matter either way, but I decided it was worth mentioning my findings here.

I concur that there is about a 16% performance reduction in the particular … I generated a sample database file like this: `WITH RECURSIVE c(x) AS (VALUES(1) UNION ALL SELECT x+1 FROM c WHERE x` … `'appid', Value->'name'`. Here (temporarily; the link will be taken down at some point): … We are still a month away from feature-freeze. You'll notice that the JSON parser is quite a bit faster. Note that the performance on the query I was working to optimize, which is a production query for a client, is about twice as fast.
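For readers who want to try the `app_names` upsert from Python, here is a minimal sketch using the standard `sqlite3` module. Several things in it are assumptions, not taken from the post: the sample payload is hypothetical (the real JSON is not shown), `json_extract` is swapped in for the `->` operator so the statement also runs on SQLite builds older than 3.38, and an explicit `ON CONFLICT(AppID)` target is added because older SQLite versions require one for `DO UPDATE`. It also assumes the SQLite library behind Python was built with the JSON functions.

```python
import json
import sqlite3

# Hypothetical sample payload; the real JSON shape is not shown in the post.
apps = [
    {"appid": 10, "name": "Alpha"},
    {"appid": 20, "name": "Beta"},
]

# json_each(?) expands the JSON array into one row per element.
# "WHERE 1" keeps the parser from reading ON CONFLICT as part of the SELECT.
UPSERT = """
INSERT INTO app_names (AppID, Name)
SELECT json_extract(value, '$.appid'), json_extract(value, '$.name')
FROM json_each(?)
WHERE 1
ON CONFLICT(AppID) DO UPDATE SET Name = excluded.Name
WHERE Name != excluded.Name
"""


def upsert_apps(conn, records):
    """Send all records as one JSON string and upsert them in one statement."""
    with conn:  # commits on success, rolls back on error
        conn.execute(UPSERT, (json.dumps(records),))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE app_names (AppID INTEGER PRIMARY KEY, Name TEXT)")

upsert_apps(conn, apps)       # first run inserts both rows
apps[1]["name"] = "Beta 2"    # rename one app
upsert_apps(conn, apps)       # second run updates only the changed row

rows = conn.execute("SELECT AppID, Name FROM app_names ORDER BY AppID").fetchall()
print(rows)
```

Passing the whole array as a single bound parameter keeps the round trip to one statement; whether that beats parsing the JSON in Python and calling `executemany` depends on the data and the SQLite version, as the timings quoted above suggest.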

Cleaning and preparing data for DataFrame-centric projects can be one of the less enviable tasks. Optimus is an all-in-one toolset designed to load, explore, cleanse, and write data back to various data sources. Optimus can use Pandas, Dask, cuDF (and Dask + cuDF), Vaex, or Spark as its underlying data engine.

A Python helper package for SQLite CRUD operations via JSON objects. This package is developed using Python 3 with no external dependencies.
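The JSON-to-SQLite idea described in this post (a table whose columns come from the dictionary keys in the JSON) can be sketched with the standard library alone. This is not the code of any package mentioned here; `quote_ident`, `load_records`, the `Note` table and the sample records are all hypothetical names chosen for illustration, and the sketch assumes flat records whose values are numbers, strings or null.

```python
import json
import sqlite3


def quote_ident(name):
    """Double-quote an SQL identifier, escaping embedded double quotes."""
    return '"' + name.replace('"', '""') + '"'


def load_records(conn, table, records):
    """Create `table` with one column per key seen in `records`, then insert them.

    Returns the list of column names in first-seen order.
    """
    columns = []
    for rec in records:
        for key in rec:
            if key not in columns:
                columns.append(key)

    col_sql = ", ".join(quote_ident(c) for c in columns)
    placeholders = ", ".join("?" for _ in columns)
    table_sql = quote_ident(table)

    with conn:  # one transaction for the CREATE and all INSERTs
        conn.execute(f"CREATE TABLE IF NOT EXISTS {table_sql} ({col_sql})")
        conn.executemany(
            f"INSERT INTO {table_sql} ({col_sql}) VALUES ({placeholders})",
            # Keys missing from a record are stored as NULL.
            ([rec.get(c) for c in columns] for rec in records),
        )
    return columns


# Hypothetical input; in practice this would come from json.load(open(...)).
records = json.loads(
    '[{"id": 1, "note": "first"}, {"id": 2, "note": "second", "tag": "x"}]'
)

conn = sqlite3.connect(":memory:")
cols = load_records(conn, "Note", records)
rows = conn.execute('SELECT * FROM "Note" ORDER BY id').fetchall()
print(cols)
print(rows)
```

Values are bound as parameters, so they are never spliced into the SQL text; only the table and column names are interpolated, which is why they go through `quote_ident`. Nested lists or objects would need to be serialized first (for example with `json.dumps`) before they can be stored.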
